What you need to know about ChatGPT and artificial intelligence

Analysts and pundits predict that OpenAI’s new ChatGPT will bring about everything from the “death of the school essay” to the dawn of a new age of communication. But what exactly is ChatGPT, and how could it change our lives?

Ask ChatGPT a question and it quickly summarizes an answer in grammatically correct, properly punctuated paragraphs. Within two weeks of its November 30 launch, millions of users were trying out the large language model artificial intelligence app. It drew so much attention that the system periodically exceeded its user capacity.

If you ask ChatGPT what it is and how it works, it will tell you: “As a large language model trained by OpenAI, I generate responses to text-based queries based on the vast amount of text data that I have been trained on. I do not have the ability to access external sources of information or interact with the internet, so all of the information that I provide is derived from the text data that I have been trained on.”

As remarkable as ChatGPT appears to be, the system does not “think” and is incapable of coming up with original ideas. It works by closely mimicking human language, and it has the potential to make writing tasks quicker and easier than ever before.

“This particular tool is strikingly great in figuring out what a user wants and putting relevant things into a really logical and clear manner, to the extent that some may be fooled into thinking it is sentient,” said Tinglong Dai, a professor of operations management at Johns Hopkins Carey Business School and an AI expert. “No other AI has been as striking as ChatGPT. It has really opened the window into the latest developments in large language models with something that most people didn't realize was possible before.”

We’re already familiar with some forms of AI. Roomba can map your house and vacuum your floors, DALL-E will create images from descriptive text, and Siri or Alexa can complete a multitude of tasks with a simple voice command. What makes ChatGPT so captivating is its seamless use of human language. While its essays are impressive, Dai said the system is not foolproof.

“It’s also very deceptive in the sense that it is incapable of telling whether what it writes is accurate,” he explained. “In fact, just based on my own extensive testing, I found that it makes tons of factual mistakes, but it does so in a confident, authoritative, people kind of a way.”

Dai also noted that ChatGPT’s answers seem to become increasingly repetitive and even “defensive” when it is asked the same question over and over again. Its answers also vary depending on the language used to ask the question, because they reflect the language of the source material ChatGPT draws from to formulate its response.

Risks or rewards

Like many tools at our disposal, ChatGPT holds great promise and frightening potential. Will it replace jobs or make it even harder for consumers to distinguish fact from fiction?

Dai explained, “This tool could pose a severe challenge to democracy, because it means that the cost of creating misinformation would become insanely low, such that it's going to be nearly impossible for people to detect AI-created content. Say that you can even make AI more authentic by inserting typos and other errors and biases that make it seem even more authentic and personable. I think that's the scariest part.”

At the same time, Dai said, there will likely be a premium on authentic writing and real thinking, which only humans can provide for now.

“Writing is not just writing; good writing reflects good thinking,” said Dai. “By taking a shortcut, I worry that people may lose that really valuable thinking skill.”

What to Read Next

student experience

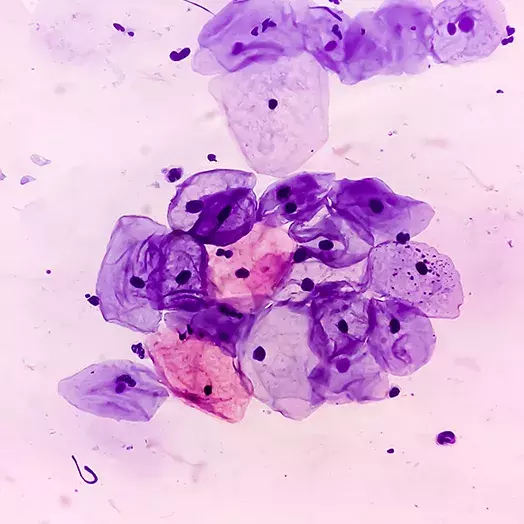

Could artificial intelligence in a “smart tampon” detect cervical cancer?Bringing AI to the classroom

Carey Business School is the only major American business school that requires full-time MBA students to study AI.

“We want them to be intimately familiar with AI theory and what's underlying all the AI tools on the market,” said Dai, who designed the course. “We want our graduates to know they will not be replaced or fall victim to false promises of AI. Instead, we want students to think critically, and learn to use AI to enhance their competitive edge, and what differentiates them from others—humans and AI agents alike.”

So how can we be sure of the source of what we’re reading? Dai says disclosure is important, and developers need to provide detection tools so that readers can tell whether something was written by a human or not.

“If you can combine a product like ChatGPT with real-time information or even with data not immediately available on the internet, you could imagine billions of people wanting to use it,” Dai said. “I really think this could be at least something as big as social media, or as big as the smartphone market. Industries need to figure out ways of working alongside AI in the future. They should look toward being augmented by AI, and not replaced by AI.”